Imagine this…

A relative forwards you a video on WhatsApp. In it, a famous politician is saying something incredibly inflammatory. Your blood boils. Furious, you forward it to ten other groups.

Two days later, you discover the video wasn’t real. It was a deepfake, cleverly crafted with AI.

How would you feel?

Probably angry. Maybe a little embarrassed that you became part of a lie.

Welcome to the new reality. A digital jungle where the line between truth and fiction is getting blurrier every single day. We had Photoshop before, but AI has put this game on super-steroids, making convincing fakes far faster and cheaper to produce, and far more dangerous.

We used to say, “I won’t believe it until I see it with my own eyes.”

Now, the question is, “Can you even believe your own eyes?”

In this article, we’re going to talk about the “dark side” of AI that most people are afraid to discuss. But we’re not going to be afraid; we’re going to understand it. Because in this new jungle, the only way to survive is not through fear, but through awareness.

This article will give you the “magic glasses” to see through the lies generated by AI. We won’t talk about complex technology. Instead, we’ll discuss three simple, practical ‘mental models’ that will protect you and your family from this digital deception.

🛡️ Quick Pit Stop: Before we pick up our shields, it’s important to remember that technology isn’t good or evil; its use makes it so. To dive deeper into the ethical side of AI, be sure to read our article: AI Ethics for Dummies: 3 Simple Questions to Ask Before Using Any AI Tool.

The Real Problem: AI Isn’t the Villain, It’s Just a Super-Steroid

First, let’s identify the real villain in this story.

The villain isn’t AI.

Lying, spreading rumors, deceiving people… these things have been around for thousands of years. AI has simply injected these age-old human intentions (greed, power, mischief) with a ‘super-steroid’.

Previously, it took a Photoshop expert hours to create a convincing fake image.

Today, a 15-year-old can give a one-line command to an AI and generate 10 realistic-looking images in 10 seconds.

So the problem isn’t the speed of technology; the problem is the speed of our thinking.

Our ability to critically evaluate information has fallen far behind the speed at which it can be generated.

We don’t need to fight AI. We need to upgrade our own ‘mental software’. We need to evolve from being passive, trusting users to active, skeptical digital citizens.

How to Spot AI-Generated Fakes: Your 3-Second Mental Antivirus

You don’t need any fancy software. Your brain is the best antivirus in the world; it just needs to be trained.

From today, before you believe or share anything sensational online, run this quick, 3-second ‘Sanity Check’.

Check 1: The Source (Where did this come from?)

This is the first and most crucial question.

- Ask yourself: “Where did I get this information? Is it from a reputable news source (like the BBC, Reuters, AP), or from an anonymous WhatsApp group or a Facebook page with a weird name?”

- The Real-World Analogy: Imagine a stranger running down the street yelling, “The city is flooding!” Would you immediately believe them and start packing? No! You’d check the news or ask other people. So why are we so naive in the digital world?

- Actionable Tip: If the source is questionable, search the headline on Google and see if any major, trustworthy news agencies are also reporting it.

Check 2: The Emotion (How is this making me feel?)

This is a psychological trick. Misinformation and AI fakes attack one thing: your emotions.

- Ask yourself: “What am I feeling right now after seeing this post? Do I feel extreme anger? Extreme fear? Or extreme excitement?”

- Why this works: Fake news is intentionally designed to trigger your strongest emotions. Why? Because when we are highly emotional, careful reasoning takes a back seat, and we hit the ‘share’ button without thinking.

- Actionable Tip: If a post makes you feel an intense emotion instantly, treat it as a red flag 🚩. Take a step back, take a deep breath, and go back to Check 1 (The Source).

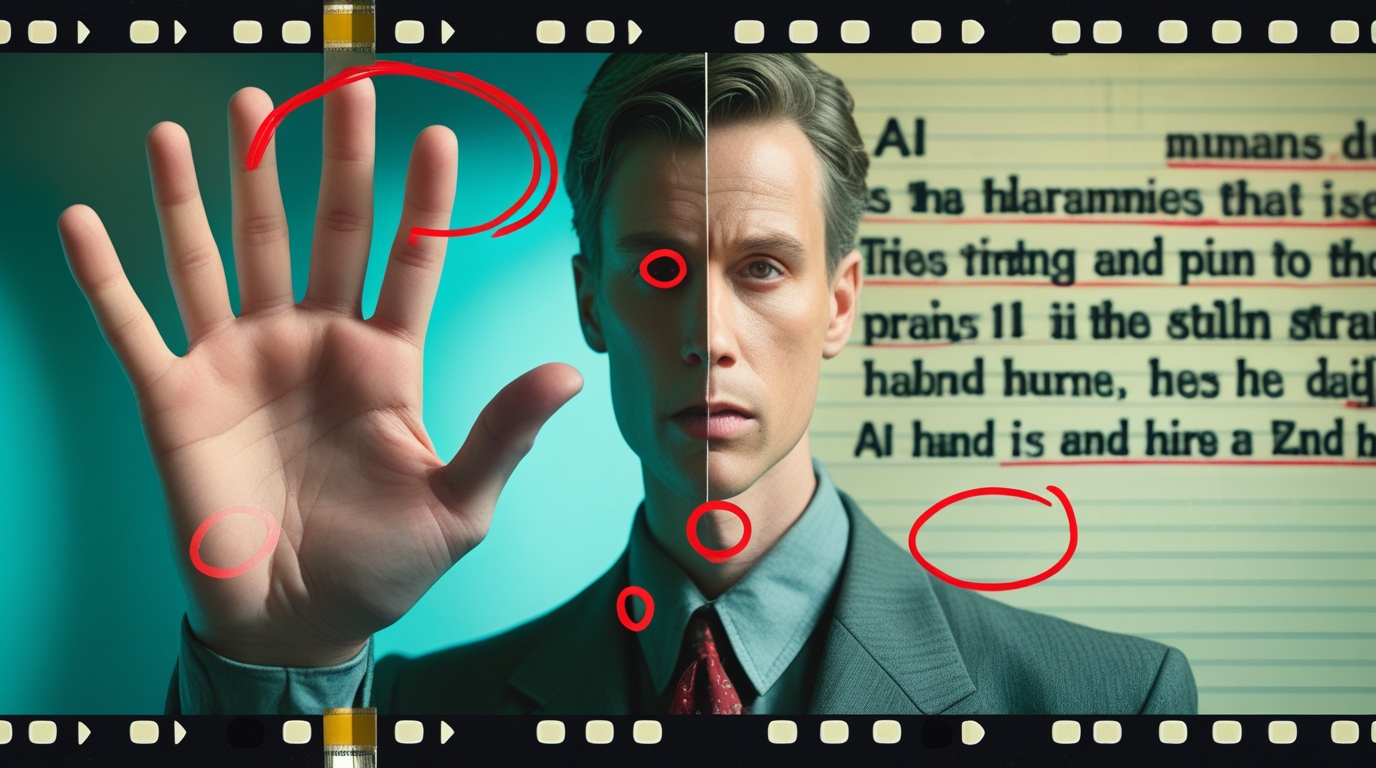

Check 3: The Details (Does anything look… weird?)

AI is getting smarter, but it’s not yet perfect. It often leaves behind small clues and mistakes that an aware person can catch.

- In Images:

  - Check the hands and fingers: AI often struggles with hands. Does a person in the image have six fingers? Are the hands twisted unnaturally?

  - Check the background: Is the text in the background gibberish or distorted? Are walls or poles bending in weird ways?

  - Check the eyes and teeth: Do the eyes look lifeless or dead? Are the teeth a little too perfect?

- In Videos (Deepfakes):

  - Blinking: Is the person blinking too little or in an unnatural way?

  - Lip-sync: Do the lip movements not quite match the audio?

  - Skin: Does the person’s skin look too smooth or waxy?

- In Text:

  - Weird Phrasing: Does the writing style feel robotic or unnatural? Does it lack emotional depth?

🧠 Quick Recall: Remember how we talked about the true nature of AI in our AI Myths article? It only copies patterns; it doesn’t “understand” the world like we do. That’s why it makes these small mistakes that ignore context.

Your Responsibility: Don’t Panic, Just Pause.

In this new era, hitting the ‘share’ button is an act of great responsibility.

You are part of an ecosystem. One wrong ‘share’ from you can create a storm in someone’s life, incite riots, or ruin an innocent person’s reputation.

So the next time you see a shocking piece of news or a surprising image, don’t panic. Don’t get excited.

Just… pause.

Pause for a moment. And activate your 3-second ‘Human Firewall’.

It is our collective responsibility to prevent this digital jungle from becoming even more toxic.

Your Next Step

Today, you’ve faced the scary truth of AI. But you’ve also learned that you are not helpless. You possess the most powerful tool in the world: your own mind.

After understanding this darkness, it’s crucial to see what the future of AI and humanity will actually look like. It’s not a future of conflict, but of partnership.

In the final article of our 30-day journey, we will explore this positive and powerful future.

➡️ Click Here to Read: The Future is Not About AI vs. Humans, It’s About AI + Humans. Welcome to the “Co-Pilot” Era.

Let’s build a more aware and intelligent digital world, together.

What’s Your Experience?

Have you ever encountered an AI-generated fake? What was your experience like? Share your story in the comments below and let us know what other methods you use to avoid digital deception.